Join us to debate the role internet platforms like YouTube should play in setting free speech agendas in your country, your language and across the world. Online editor Brian Pellot kicks off the discussion.

The fact that Google, which owns YouTube, has voluntarily blocked the Islamophobic video clip Innocence of Muslims in Egypt and Libya has opened a spirited debate. Where YouTube is localised with country-specific versions of the site, Google routinely accepts government requests to restrict local access to content that clearly violates local laws. Google has restricted access to Innocence of Muslims in Saudi Arabia, Jordan, India, Indonesia, Malaysia and Singapore on these grounds. But for Google to restrict access preemptively, without any request from the Egyptian or Libyan governments, because of what it called “very sensitive situations” (violent protests), is unprecedented and indeed troubling.

Free Speech Debate’s sixth draft principle says, “We neither make threats of violence nor accept violent intimidation.” Deadly demonstrations around the world, ostensibly fueled by outrage over Innocence of Muslims’ denigrating portrayal of the Prophet Muhammad, have been used to pressure governments, internet service providers and Google to block the video. Violent attacks are unjustifiable in any circumstance and must obviously be condemned, but caving in to violent intimidation can also be dangerous.

Days after US ambassador to Libya J. Christopher Stevens was killed in a Benghazi attack purportedly stemming from outrage over Innocence of Muslims, the White House and the Australian government urged Google to review the video against the company’s terms of service, a request Google denied. The company had already reviewed the video and publicly declared that it neither violated its terms of service nor constituted hate speech, because it did not directly incite violence. YouTube’s terms of service make no reference to violence or incitement, but the site’s community guidelines say, “we don’t permit hate speech (speech which attacks or demeans a group based on race or ethnic origin, religion, disability, gender, age, veteran status, and sexual orientation/gender identity)”.

The distinguished American legal scholar and First Amendment expert Robert C. Post rightly argues that Innocence of Muslims, which I link to here, does not “attack” a group based on religion. But it does seem to “demean” Muslims and their faith. With YouTube’s community guidelines in mind, it is unclear why the video has not been removed or at least marked as offensive.

This video of a bishop claiming no Jews died in gas chambers during the Holocaust is preceded on YouTube by a message that reads, “The following content has been identified by the YouTube community as being potentially offensive or inappropriate. Viewer discretion is advised.” This warning likely resulted from viewers flagging the video as inappropriate under the “Hateful or Abusive Content: promotes violence or hatred: religion” tag. According to YouTube’s Hateful Content policy, “if a video that you have flagged or comment that you have reported hasn’t been removed, it’s because it doesn’t violate our hate speech policies”. As many viewers clearly find the message of Innocence of Muslims offensive and hateful, the absence of a warning at its start seems puzzling. It should, however, be noted that videos other religions might find comparably offensive have also not been removed from YouTube or branded “potentially offensive”. Christians could be equally outraged by this video of Jesus singing I Will Survive dressed only in a nappy and then getting run over by a truck, but the video remains accessible, with 10 million views and no warning of offence.

More startling than these apparent discrepancies has been Google’s near silence about its decisions. As of September 26, two weeks after the US ambassador was killed in the first round of protests over the video, not a single mention of Innocence of Muslims had appeared on Google’s official blog, public policy blog, or YouTube blog. The company did, however, issue several statements to the press.

On September 15, a statement was released saying: “We work hard to create a community everyone can enjoy and which also enables people to express different opinions. This can be a challenge because what’s OK in one country can be offensive elsewhere. This video—which is widely available on the Web—is clearly within our guidelines and so will stay on YouTube. However, we’ve restricted access to it in countries where it is illegal such as India, Saudi Arabia and Indonesia as well as in Libya and Egypt given the very sensitive situations in these two countries. This approach is entirely consistent with principles we first laid out in 2007.” These principles make clear the complex and challenging decisions Google faces regarding free expression and controversial content online. The 2007 post also explicitly states, “Google is not, and should not become, the arbiter of what does and does not appear on the web.” Is that not precisely what the company did when it voluntarily blocked Innocence of Muslims in Libya and Egypt?

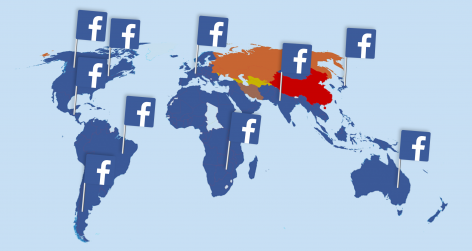

As of September 26, governments in 21 countries, including those already mentioned, had asked Google to review or block Innocence of Muslims. In Pakistan, Bangladesh and Afghanistan, where YouTube is not localised with a country-specific version of the site, Google rejected removal requests. The governments of those countries responded by blocking YouTube entirely. Bahrain, the UAE, Sudan and Kyrgyzstan all blocked Innocence of Muslims without even submitting a takedown request to Google. The Maldives, Brunei and Russia have also threatened to block the video, and an Arab-Israeli political party requested that it be censored locally. Please add the latest updates from your country in the comment thread below.

Jillian C. York argues, “Although restricting the video in [Egypt and Libya] might seem tempting in the wake of the horrific violence that occurred in Libya, it is in the best interest of neither the company nor, arguably, the citizens of those countries for Google to be the arbiter of acceptability.”

Google’s capitulation to violent intimidation in Egypt and Libya could save lives in the short term, but it could also set a dangerous precedent, opening a Pandora’s box of grievances every time controversial content is posted and someone or some group takes violent offence. This could in turn lead to greater censorship, and ultimately greater violence, if people decide that killing is the most effective way to air their grievances and get their way.

Brian Pellot is online editor at Free Speech Debate.

The story is a slightly more relevant take on an absurd phenomenon. What I find more interesting is our apparent lack of awareness of the fact that we are automatically drawn into debates on subjects that the mass media have emphasised. Of course, you would think this understandable and acceptable if you believed the media to be a benevolent force of pure truth. My point here is that free speech is limited to what we know and is therefore currently not free at all. We live in a speech oligarchy where the likes of Rupert Murdoch control what we talk and think about.

In response to the question above, my view is that using the filmmaker’s apparent breach of probation was an expedient (and, for the authorities, somewhat lucky) means of appearing to act against the offending party, while also being able to justify their actions to another audience as nothing more than a routine observance of local law. In the first instance it may go a little way towards appeasing the calls for blood, but I think a valuable opportunity has been missed for a liberal democracy to stand up to theocratic bullying and to draw a real line in the sand showing where liberal secular values lie. This is set in the context of a broader gathering storm about the secularity of societies.

There must therefore have been a ‘public good’ argument for resisting the calls to arrest the filmmaker, on the basis that events as they have come to pass will have sent a signal, however ambiguous, that a democratic state will acquiesce to external violent pressure. There was surely a moral duty on the part of the authorities to protect freedom of speech, but even more importantly to ensure that the protesters did not believe their violent actions could influence the response of the State. This is akin to paying a ransom to kidnappers. The relatively trivial issue of a probation transgression could have been dealt with later, when the dust had settled.

Update: Google lifts censorship of IOM in Libya and Egypt:

http://www.tnr.com/blog/plank/108233/youtube-unblocks-incendiary-video-temporary-censorship-still-censorship

A lot of very interesting comments so far! Here’s another question for you: do you think the filmmaker behind Innocence of Muslims was jailed for probation violations or because of the unrest IOM sparked around the world?

The LA Times frames this as a question of free speech here:

http://www.latimes.com/news/local/la-me-filmmaker-20121003,0,4693181.story

Feel free to chime in below, respond to the thread above or comment directly on my post.

Russia, where the predominant religion is Orthodox Christianity, is keen to pass a new set of laws protecting the fragile “feelings of believers”, and it seems that an offence to any religion, made by anyone anywhere, is welcome as a precedent. The case against “Innocence of Muslims” in Moscow was started by Ruslan Gattarov, an MP from the Southern Urals and a member of the Russian Federation’s information policy committee. Yesterday, according to Gattarov, a court in Grozny (Chechnya) gave the video “extremist” status; Gattarov called the court’s decision “absolutely correct” and later tweeted that there was no need to worry, as Google would have to remove it. Gattarov is supported by the Federal Service for Supervision of Communications, Information Technology and Mass Media (Roskomnadzor), which has forbidden the Russian media to describe the content of the video so that the “feelings of believers are not offended”. However, yesterday the Prosecutor General’s Office also issued a statement claiming that the video “had not yet been assigned ‘extremist’ status; the court in Grozny had only issued a recommendation to do so as a restrictive measure against its viral spread; the final decision has not been made yet”. At the time of writing, the video is available to watch and is offered for download by a few independent Russian sites. The hearing in Moscow is scheduled for 1 October. An interesting twist is the appearance in the media of articles that link the case of “Innocence of Muslims” to the case of Pussy Riot and claim that, if the video is banned, it would prove to those still in doubt that the verdict given to Pussy Riot was also “absolutely correct”.

Innocence of Muslims has been banned in Russia, and the full version has been removed from YouTube. Fragments dubbed in Russian are still available to watch, with the following disclaimer: “This video contains material that may offend the feelings of believers. The authors of the translation respect the religion and do not wish to offend people who watch this video. This fragment is for educational purposes only, so that people can see for themselves why Muslims around the world protested.”

The Holocaust was the industrial murder of millions of people. We do not permit denial and insist on its being remembered to make sure nothing like it happens again. It is not comparable with a foolish film on YouTube which no-one is compelled to view.

All of us could, if we searched, find something deeply offensive to us on the internet or in the press. But we do not go looking, and if we come across such an item we do not react with violence, because we accept that we have no right not to be offended. Indeed, we know that being offended is an uncomfortable but unavoidable part of life, which we must bear without violence for the sake of communal integrity, peace and freedom. We know there are political or legal means to address such issues.

Muslim leaders should reflect on the upbringing that produces a sense of entitlement to violent responses so totally disproportionate to any real harm done, and realise that freedom of expression across the entire world cannot be controlled by displays of irrational violence. Far from instilling terror and respect, these reactions bring disrespect, ridicule and contempt on their faith, because many people see them as weak and childish.

Intimidatory violence must never be rewarded, least of all by censorship.

The Holocaust has become a recurring example for highlighting selective free speech in the West because it is the one subject that provokes the Western mind, just as insulting the Prophet Muhammad is the easiest way to enrage millions of Muslims. Both sides seem to follow the same philosophy: hit the adversary where it hurts most. Those who suggest that Muslims should become desensitized to derisive content about Muhammad are simply unable to understand the world view of nearly 1.6 billion Muslims. Similarly, Muslims with roots in Asia and Africa are unable to understand Europe’s policies on genocide, as they did not live through the horrors of genocide as Europe did.

Thank you, Farooq, for highlighting this extremely important point. The French philosopher Pascal Bruckner has explained how we in the West have created a scale of genocides on which the Holocaust stands at the highest possible level and other genocides (Rwanda, Armenia, the Gulag, etc.) follow behind. By establishing such a ranking, we imply that no other genocide could reach the level of atrocity of the Holocaust, which is in itself a good thing. Yet we need to be very careful not to imply as well that, because nothing can reach the top of the scale, nothing else deserves as much attention or action.

While some Muslims are certainly unaware of what happened during WWII, many others understand it very well. What they do not understand, and what we should all ask ourselves, is this: are we remembering the Holocaust so that this extreme case of genocide never happens again, or so that racial, ethnic and discriminatory politics never rise again and are never used against any human being? Even though we call it a World War, for many people on this planet that war does not have the same meaning or significance. And yes, some might be more offended by the publication of caricatures of their beloved prophet than by the publication of Mein Kampf, because these caricatures hurt them directly; they do not need to take a class in Western history to understand their offensive character. Are we, in Europe, able to understand the atrocity of the Holocaust even if we have no connection with it whatsoever, yet unable to feel similar empathy for modern-day issues because they are not as atrocious?

Hence, this idea of scale when it comes to genocide or freedom of expression is problematic. If we want to keep such a scale (why not?), we still need to be aware that it is a Western perspective and millions of people might disagree with our ranking.

@Brian: My sense is that such a suit would have a better chance in (continental) Europe, where we have so-called portrait rights as part of copyright law.

A judge in Los Angeles denied a request to remove IOM from YouTube. The request was made by Cindy Lee Garcia, who acted in the film and claimed she was misinformed and misled about its actual content.

http://latimesblogs.latimes.com/lanow/2012/09/judge-innocence-of-muslims-youtube.html

This is interesting. Does anyone else find it strange that there are voice-overs in the film whenever Mohammed, or anything related to Mohammed, is mentioned? If the producers led the actors to believe that the project was something different, I would start to have second thoughts about the extent to which the producers are “champions” of free speech.

Many years ago, I asked my Spiritual Master, “All the chaos in the world: who is responsible for it?”

The Master replied, “Two classes of people who live by the philosophy of divide and rule: one is politicians and the other preachers.”

We should be aware and wary of these two classes of people.

I think the best way to think about this is to analogise from the law on online torts. Most countries have so-called “safe-harbour” provisions that protect internet service providers and other companies, including YouTube, from being sued, as long as they respond to valid takedown notices and (this is important here) as long as they have no actual control over their content. The more a website shapes what people write, the more likely it is that it can be sued. (There is a famous case about discrimination on Craigslist, in the housing section.)

This is an approach that makes sense. If a company voluntarily takes on the task of censoring and shaping what is written on its website, people should be able to sue it; but companies should be able to opt out of that responsibility in cases where the volume of traffic, etc., is such that they cannot reasonably be asked to filter everything that is written or posted.

Applying this to the present controversy, the answer is that YouTube should be asked to follow its own terms of use, which is in fact what the US government has done. The content of those terms of use, however, is none of the government’s business. As it happens, they are already much too restrictive for my taste, banning all sorts of things that puritan Americans don’t want to see, but that is strictly a matter for YouTube to decide. It is not OK to hold them responsible for something posted on their website if they haven’t promised in advance that they would filter out such content. In this case, YouTube has declared that this clip is consistent with its terms of use, and that is that.

The result of this approach is that in most cases websites like YouTube and Facebook will become public fora where people can post what they like. And that is all the better: we need such places on the internet. Given that such content is only found by people who actively go looking for it, I really don’t see the problem.

In the Maldives, the government has officially banned the film and is trying to remove access to it on YouTube. The Communications Authority has said that blocking YouTube altogether was “not practical”. There was a small protest outside the UN building in Male’, where a US flag was burned. Some protesters brought along children carrying plastic AK-47s. It was fairly low-key, though, and more a show of solidarity than an angry demonstration.

Thanks for this, great article.

As the author points out, it seems to be difficult to work out whose ethical assessment to use for the question of whether the video should be online. There are 1) the producers of Innocence of Muslims; 2) Google; 3) governments around the world; and 4) Muslim protesters. If I had to go with one, I would choose governments, especially if they are democratically elected. Google’s “hate speech” community guideline has all the contradictions of defining who is a minority and who is not, and comes across as too Western for such a global debate. The good thing about governments is that I can always revolt. Google is further away.

Sir: The bad thing about governments, especially those that have not been democratically elected, is that they can always ignore the revolt and carry on regardless. Google lives and dies by its IP and the Digital Millennium Copyright Act, while these non-democratic governments live as they please and only die when forcibly removed. I personally would pick Google’s side in this debate, as it seems to me that this would be the side of the law.

Whose law?