Internet Service Providers do not merely route data packets from end-to-end, but are heavily involved in monitoring their customers’ online activities. Ian Brown discusses the implications of Britain’s suggested “voluntary” opting out of “adult content”, with little parliamentary and court involvement.

The evolution of Internet technology over the last decade has enabled Internet Service Providers (ISPs), both in pursuit of profit, and under government pressure, to move away from their previous function of “mere conduits”. Rather than simply routing data packets from one end-point to another – the famous end-to-end model of the Internet’s designers – ISPs have been doing more monitoring and blocking of their customers’ online activities. While this is often in pursuit of widely agreed social goals – particularly, reducing the abuse of children – these technical changes significantly affect Internet users’ freedoms.

Edward Snowden revealed just how far this trend has enabled NSA and GCHQ to access individuals’ digital traces. While much of this surveillance has been done by intelligence agency monitoring of data flows, traffic analysis done by ISPs themselves is more cheaply and scalably available to intelligence and policing agencies. This is one reason why the UK government continues to push for new data retention laws, despite the Court of Justice of the European Union’s judgment invalidating the Data Retention Directive.

Blocking technologies often generate logs of all users that attempt to connect to blocked content. Following political pressure, UK ISPs are now asking all customers whether they want “adult” content blocked. Given the breadth of categories affected, this will significantly affect both the privacy and the right to receive information of a large share of the UK population. Across the main ISPs, categories being blocked include sex-related material (including sex education and gay and lesbian sites), violent material, “extremist related content”, “anorexia and eating disorder websites”, “suicide related websites”, “alcohol”, “smoking”, “web forums”, “esoteric material”, and “Web blocking circumvention tools”.

Who decides what should be blocked is a critical question. The most legitimate decision-makers from a human rights perspective are parliamentarians and the courts. But most blocking is done on a “voluntary” basis, following informal pressure on ISPs and websites. BT’s Cleanfeed system can be traced back to threats in the 1990s by the Metropolitan Police to seize ISP servers. Ministers gave regular speeches threatening action against ISPs until they were satisfied with the level of blocking of sites on the industry-funded Internet Watch Foundation (IWF) list.

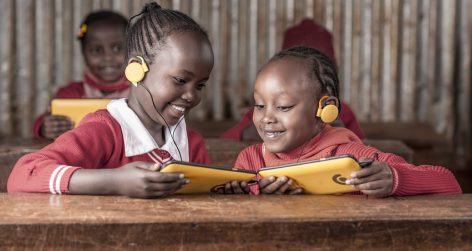

Pages are added to this list following assessment by IWF staff of the criminality of online images. But site publishers are not notified when they are added to this list, and few ISPs explicitly notify users when they attempt to access blocked content, limiting the ability for specific blocks to be challenged. One reason the blocking of a Wikipedia page got so much media attention in 2008 was that so few people realised this process existed. Internet users themselves will be able to opt out of the UK’s “adult” filters, and specific blocking categories. But we see that this has been difficult in practice for customers of mobile phone networks, which have been blocking “adult” content for a longer period of time.

While it will be relatively straightforward for older children to circumvent blocks – by sharing material with friends, using VPNs, or tools like Tor – this might be a step too far for some groups, like gay teenagers, who have benefited the most from the ability to get information and participate in online communities away from sometimes unsympathetic home environments. This ability can be very supportive of teenagers’ mental health, and of their ability to develop their identity and personality.

Greater use of anonymisation services will not be welcomed by the intelligence and law enforcement agencies. MI5 apparently lobbied about this concern during the passage of the Digital Economy Act. The government has chosen since then not to bring into effect that Act’s provisions for blocking of copyright-infringing sites. The agencies’ concern might lead the government to encourage ISPs over time to try to block these anonymisation services.

Debates over technical measures to block young Internet users’ access to online pornography need to take into account these intended and unintended effects, if they are to genuinely protect individuals while minimising negative impacts on human rights.

Ian Brown is the author (with Chris Marsden) of Regulating Code: Good Governance and Better Regulation in the Information Age.